Apache Kafka 4.2 on Ubuntu 24.04 on Azure User Guide

Overview

Apache Kafka is the leading open-source distributed event streaming platform, offering high-throughput producers and consumers, exactly-once semantics, KRaft consensus (no ZooKeeper), and Queues for Kafka (KIP-932, GA). The cloudimg image installs Kafka 4.2.0 (Scala 2.13 binary) from the official Apache CDN with SHA-512 verification, alongside OpenJDK 17. The broker runs in single-VM KRaft mode (combined broker + controller) with SASL/SCRAM-SHA-256 authentication. A per-VM cloudimg admin user is provisioned at first boot via Kafka 4's native kafka-storage.sh format --add-scram flag. AKHQ 0.26.0, an Apache 2.0 licensed web UI, is bundled alongside Kafka and served on TCP 80 (proxied through nginx with HTTP basic auth), so operators get a Kafka admin console out of the box.

What is included:

- Apache Kafka 4.2.0 (Scala 2.13) at /opt/kafka

- OpenJDK 17 JRE headless from Ubuntu 24.04 noble main

- Single-VM KRaft cluster: kafka.service running broker+controller on the same JVM

- SASL/SCRAM-SHA-256 listener on TCP 9092 for clients (auth-walled by cloudimg admin)

- AKHQ 0.26.0 Web UI on TCP 80 with HTTP basic auth (nginx reverse proxy in front of AKHQ on 127.0.0.1:8080)

- Pre-created cloudimg topic (1 partition, replication 1)

- Per-VM cloudimg password (32 hex chars) used for both Kafka SCRAM AND AKHQ basic auth

- Credentials at /stage/scripts/kafka-credentials.log

- 24/7 cloudimg support

Prerequisites

You need an active Azure subscription, an SSH key, and a VNet with a subnet. Standard_B2s (4 GB RAM) is suitable for dev, test, single-tenant production streaming, edge/IoT brokers, and PoC workloads. For heavier production workloads, move up to D4s/D8s and tune KAFKA_HEAP_OPTS in /etc/default/kafka. NSG inbound: allow 22/tcp from your management CIDR, 9092/tcp from your client CIDR (Kafka SASL/SCRAM clients), and 80/tcp from your operator CIDR (AKHQ Web UI).
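The three inbound rules can be created from the Azure CLI. The following is a minimal sketch, not the image's own tooling: the function name nsg_allow, the AZ override variable, the rule names, and the priorities are all illustrative assumptions, and the resource group and NSG name are whatever your deployment uses.

```shell
#!/bin/sh
# Sketch: open the inbound ports listed in the Prerequisites with the Azure CLI.
# AZ is an assumed override hook (defaults to the real `az` binary).
AZ="${AZ:-az}"

# nsg_allow <resource-group> <nsg-name> <rule-name> <priority> <source-cidr> <port>
nsg_allow() {
  "$AZ" network nsg rule create \
    --resource-group "$1" --nsg-name "$2" --name "$3" --priority "$4" \
    --direction Inbound --access Allow --protocol Tcp \
    --source-address-prefixes "$5" --destination-port-ranges "$6"
}

# Example calls (replace every <placeholder>; rule names and priorities are arbitrary):
#   nsg_allow <rg> <nsg> allow-ssh   1000 <management-cidr> 22
#   nsg_allow <rg> <nsg> allow-kafka 1010 <client-cidr>     9092
#   nsg_allow <rg> <nsg> allow-akhq  1020 <operator-cidr>   80
```

Keeping one rule per port makes it easy to tighten the source range for a single audience later without touching the others.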

Step 1-3: Deploy + SSH (standard pattern)

ssh azureuser@<vm-ip>

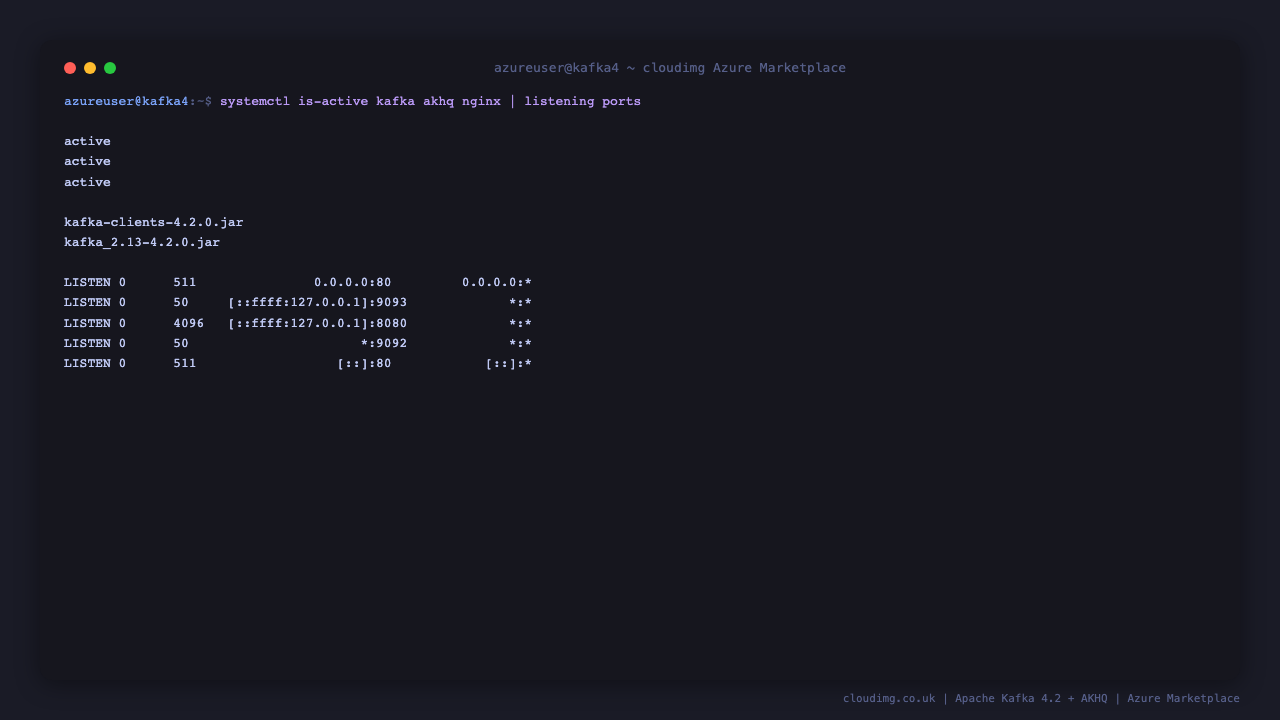

Step 4: Service Status + Version

sudo systemctl is-active kafka.service akhq.service nginx.service

ls /opt/kafka/libs/ | grep -E '^kafka-clients-' | head -1
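If you only want the version string rather than the jar file name, the listing above can be trimmed with sed. This is a small sketch that assumes the jar follows the usual kafka-clients-<version>.jar naming; kafka_version is a hypothetical helper name.

```shell
#!/bin/sh
# kafka_version <libs-dir>: print the version embedded in the kafka-clients jar name.
kafka_version() {
  ls "$1" | sed -n 's/^kafka-clients-\(.*\)\.jar$/\1/p' | head -n 1
}

# Usage on the image:
#   kafka_version /opt/kafka/libs
```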

Step 5: Read Per-VM Credentials

sudo cat /stage/scripts/kafka-credentials.log

Pick up KAFKA_BOOTSTRAP, KAFKA_ADMIN_USER, KAFKA_ADMIN_PASSWORD. The same password is used for the AKHQ Web UI basic auth.

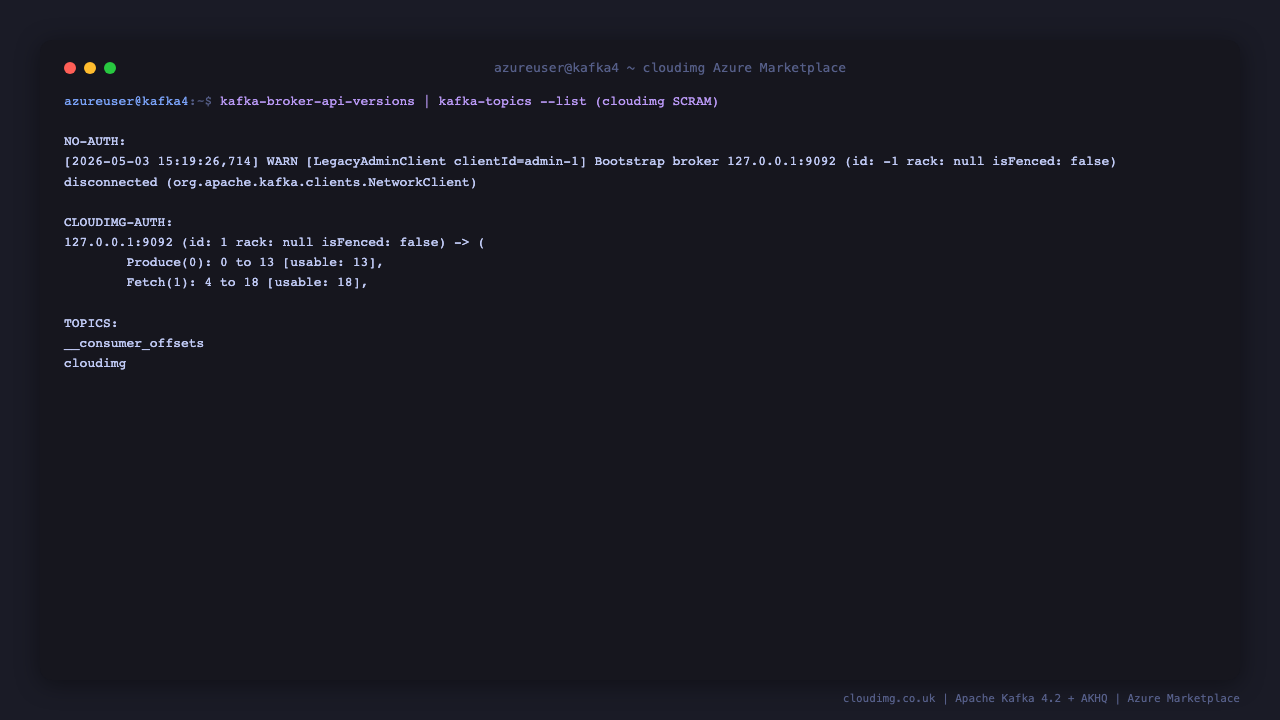
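To use these values in scripts instead of copying them by hand, the file can be parsed as KEY=VALUE lines, which is the layout the grep in Step 6 relies on. The helper below is a sketch; cred_get is a hypothetical name, and it reads from stdin so that sudo is only needed for the cat.

```shell
#!/bin/sh
# cred_get <KEY>: read KEY=VALUE lines on stdin and print the value for KEY.
# Everything after the first "=" is kept, so values containing "=" survive intact.
cred_get() {
  grep "^$1=" | head -n 1 | cut -d= -f2-
}

# Usage on the VM (sudo as in the step above):
#   creds=$(sudo cat /stage/scripts/kafka-credentials.log)
#   KAFKA_BOOTSTRAP=$(printf '%s\n' "$creds" | cred_get KAFKA_BOOTSTRAP)
#   KAFKA_ADMIN_USER=$(printf '%s\n' "$creds" | cred_get KAFKA_ADMIN_USER)
#   KAFKA_ADMIN_PASSWORD=$(printf '%s\n' "$creds" | cred_get KAFKA_ADMIN_PASSWORD)
```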

Step 6: Auth Wall + Broker API Versions

PASS=$(sudo grep '^KAFKA_ADMIN_PASSWORD=' /stage/scripts/kafka-credentials.log | cut -d= -f2-)

cat > /tmp/cloudimg.properties <<EOF

security.protocol=SASL_PLAINTEXT

sasl.mechanism=SCRAM-SHA-256

sasl.jaas.config=org.apache.kafka.common.security.scram.ScramLoginModule required username="cloudimg" password="${PASS}";

EOF

/opt/kafka/bin/kafka-broker-api-versions.sh --bootstrap-server 127.0.0.1:9092 --command-config /tmp/cloudimg.properties | head -3

Without --command-config the call fails, because the listener rejects unauthenticated clients; with the cloudimg SCRAM credentials it returns the broker API version matrix.

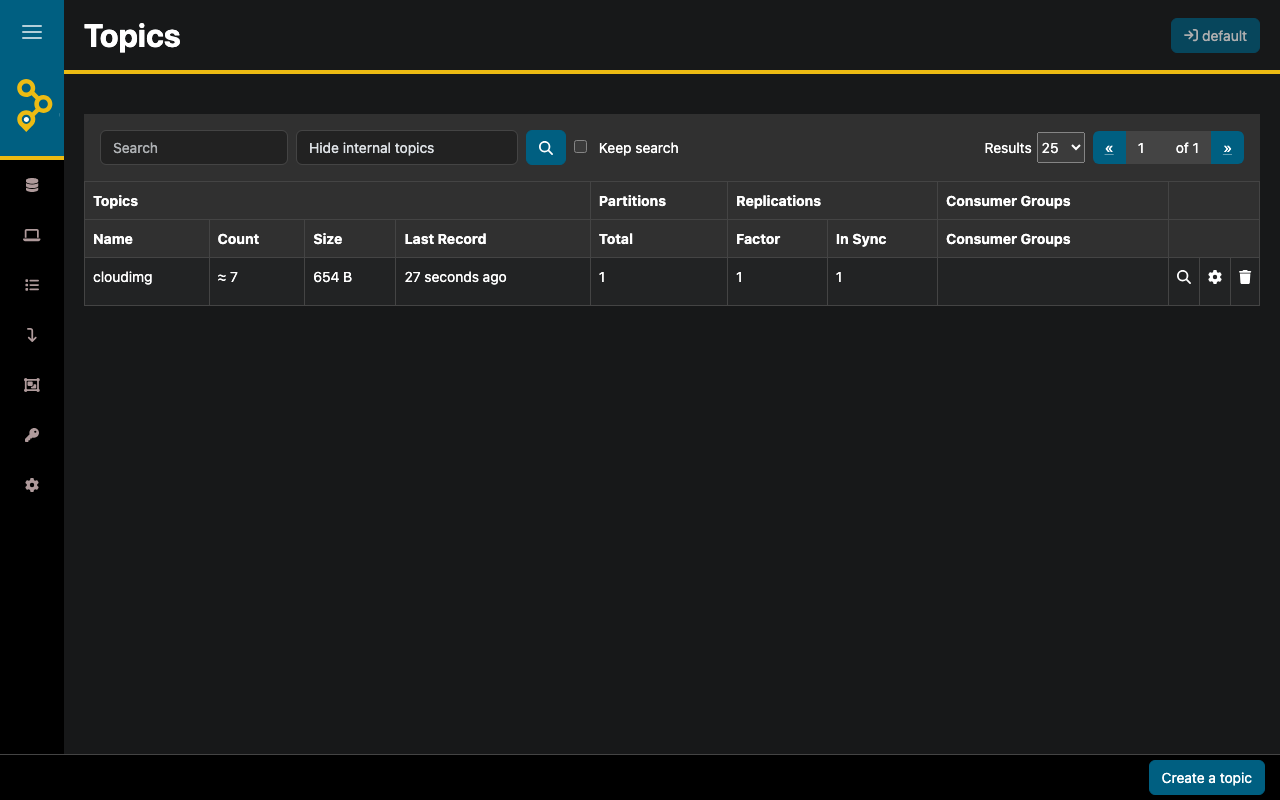
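Because the properties file holds the admin password in clear text, you may prefer to create it with owner-only permissions rather than the default umask. The following sketch writes the same three settings used in this step; write_client_props is a hypothetical helper, and it assumes the password contains no double quotes or backslashes (true for the 32 hex character password generated on this image).

```shell
#!/bin/sh
# write_client_props <path> <user> <password>
# Writes a SASL/SCRAM client config that only the current user can read.
# The body runs in a subshell, so the umask change does not leak to the caller.
write_client_props() (
  umask 077
  rm -f "$1"          # recreate the file so the restrictive mode actually applies
  cat > "$1" <<EOF
security.protocol=SASL_PLAINTEXT
sasl.mechanism=SCRAM-SHA-256
sasl.jaas.config=org.apache.kafka.common.security.scram.ScramLoginModule required username="$2" password="$3";
EOF
)

# Usage, with PASS read as shown in this step:
#   write_client_props /tmp/cloudimg.properties cloudimg "$PASS"
```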

Step 7: AKHQ Web UI — Topic List

Browse to http://<vm-ip>/ and authenticate as cloudimg with the password from Step 5. The Topics tab lists every topic, including the pre-created cloudimg topic.

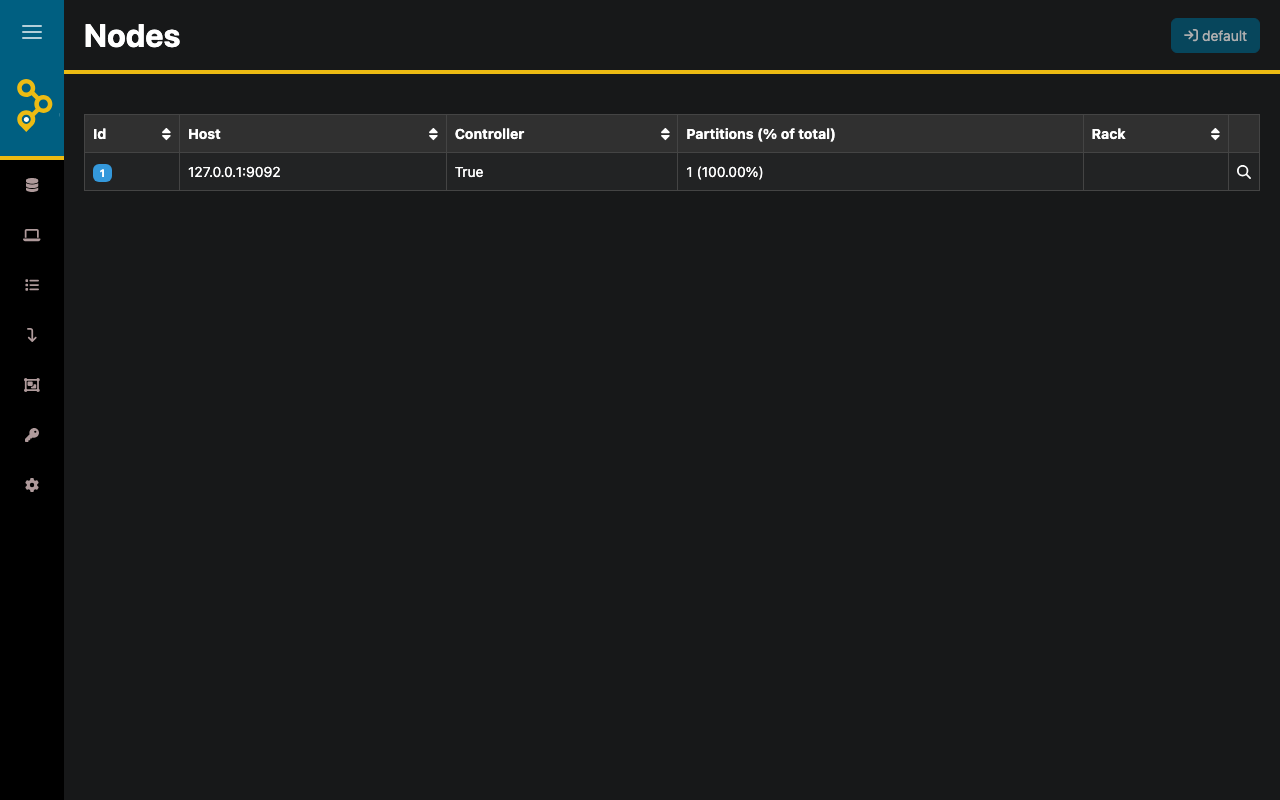

Step 8: AKHQ — Cluster Brokers

The Nodes tab shows the single broker with id 1, host 127.0.0.1:9092, and the controller leader.

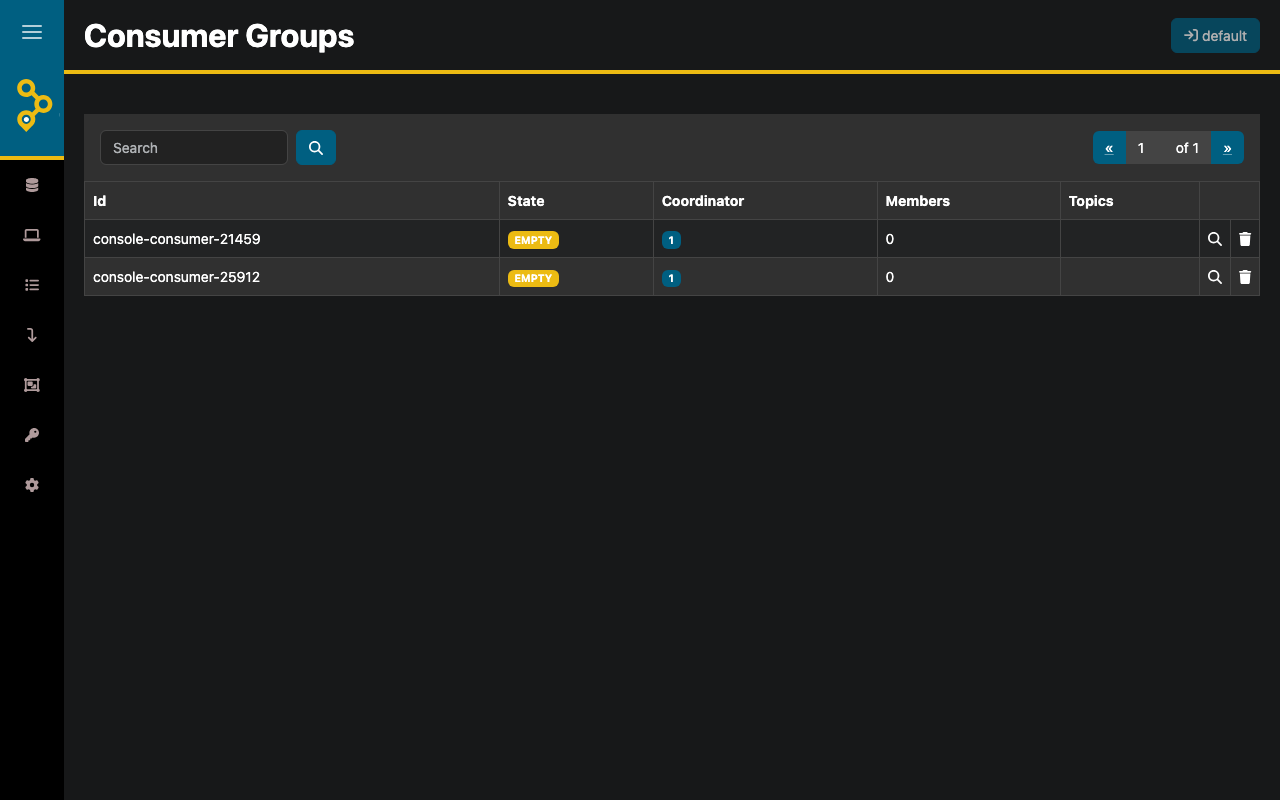

Step 9: AKHQ — Consumer Groups

The Consumer Groups tab will show consumer groups and their lag once you have consumers running. On a fresh deploy the list is empty until you produce + consume a message.

Step 10: Produce + Consume from the CLI

PASS=$(sudo grep '^KAFKA_ADMIN_PASSWORD=' /stage/scripts/kafka-credentials.log | cut -d= -f2-)

cat > /tmp/cloudimg.properties <<EOF

security.protocol=SASL_PLAINTEXT

sasl.mechanism=SCRAM-SHA-256

sasl.jaas.config=org.apache.kafka.common.security.scram.ScramLoginModule required username="cloudimg" password="${PASS}";

EOF

echo "hello cloudimg $(date +%s)" | /opt/kafka/bin/kafka-console-producer.sh --bootstrap-server 127.0.0.1:9092 --command-config /tmp/cloudimg.properties --topic cloudimg

/opt/kafka/bin/kafka-console-consumer.sh --bootstrap-server 127.0.0.1:9092 --command-config /tmp/cloudimg.properties --topic cloudimg --from-beginning --max-messages 1 --timeout-ms 10000

The producer sends a single message; the consumer then reads one message starting from offset 0, which on a fresh topic is the message just produced.
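On a topic that already holds older messages, reading one message from offset 0 returns the oldest record, not the one you just sent. A round-trip check that works either way is to produce a unique marker and search the consumed output for it. This is a sketch under assumptions: kafka_roundtrip, KAFKA_BIN, BOOTSTRAP, and PROPS are illustrative names, and the properties file from this step is expected to exist.

```shell
#!/bin/sh
# Round-trip smoke test: produce a unique marker, then confirm it can be read back.
KAFKA_BIN="${KAFKA_BIN:-/opt/kafka/bin}"
BOOTSTRAP="${BOOTSTRAP:-127.0.0.1:9092}"
PROPS="${PROPS:-/tmp/cloudimg.properties}"

# kafka_roundtrip <topic>: returns 0 if the marker was produced and found again.
kafka_roundtrip() {
  marker="roundtrip-$(date +%s)-$$"
  printf '%s\n' "$marker" | "$KAFKA_BIN/kafka-console-producer.sh" \
    --bootstrap-server "$BOOTSTRAP" --command-config "$PROPS" \
    --topic "$1" || return 1
  # Read from the beginning until the idle timeout and look for an exact match.
  "$KAFKA_BIN/kafka-console-consumer.sh" \
    --bootstrap-server "$BOOTSTRAP" --command-config "$PROPS" \
    --topic "$1" --from-beginning --timeout-ms 10000 \
    | grep -qxF "$marker"
}

# Usage:
#   kafka_roundtrip cloudimg && echo "round trip OK"
```

Scanning from the beginning is fine for a small test topic; on a large topic it would be slow, so treat this as a post-deploy check rather than a monitoring probe.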

Step 11: Create a New Topic

Replace <topic-name> with your topic name and <partitions> / <replication> with the desired counts. The replication factor cannot exceed the number of brokers, so keep it at 1 on a single VM:

/opt/kafka/bin/kafka-topics.sh --bootstrap-server 127.0.0.1:9092 --command-config /tmp/cloudimg.properties --create --topic <topic-name> --partitions <partitions> --replication-factor <replication>
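When topic names come from a script or another system, it can help to reject invalid names before calling the CLI. Kafka accepts ASCII letters, digits, ".", "_" and "-", up to 249 characters, and refuses the names "." and "..". The check below is a sketch of that rule; valid_topic_name is a hypothetical helper, not part of the Kafka distribution.

```shell
#!/bin/sh
# valid_topic_name <name>: succeed only for names that Kafka's naming rules accept.
valid_topic_name() {
  case "$1" in
    ''|.|..) return 1 ;;                 # empty, "." and ".." are rejected
    *[!A-Za-z0-9._-]*) return 1 ;;       # any character outside the allowed set
  esac
  [ "${#1}" -le 249 ]                    # length limit
}

# Usage (TOPIC is a variable you set; --if-not-exists makes the call safe to repeat):
#   valid_topic_name "$TOPIC" && /opt/kafka/bin/kafka-topics.sh \
#     --bootstrap-server 127.0.0.1:9092 --command-config /tmp/cloudimg.properties \
#     --create --if-not-exists --topic "$TOPIC" --partitions 3 --replication-factor 1
```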

Step 12: Tune Heap

Edit /etc/default/kafka and restart:

sudo systemctl restart kafka.service

Defaults on Standard_B2s:

- KAFKA_HEAP_OPTS: -Xms512m -Xmx1024m

- G1GC tuning enabled

- Log directory: /var/lib/kafka/data/logs

- Metadata directory: /var/lib/kafka/data/metadata

For production raise -Xmx to 4096m or higher on D4s/D8s instances.
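The edit itself can be scripted. The sketch below assumes /etc/default/kafka holds a single KAFKA_HEAP_OPTS=... line in KEY=VALUE form with a double-quoted value; check the file on your VM before relying on it. set_kafka_heap is a hypothetical helper and uses GNU sed, as shipped with Ubuntu.

```shell
#!/bin/sh
# set_kafka_heap <env-file> <Xms> <Xmx>
# Replaces an existing KAFKA_HEAP_OPTS= line, or appends one if none exists.
set_kafka_heap() {
  heap_line="KAFKA_HEAP_OPTS=\"-Xms$2 -Xmx$3\""
  if grep -q '^KAFKA_HEAP_OPTS=' "$1"; then
    sed -i "s|^KAFKA_HEAP_OPTS=.*|$heap_line|" "$1"
  else
    printf '%s\n' "$heap_line" >> "$1"
  fi
}

# Usage, as root on a larger instance:
#   set_kafka_heap /etc/default/kafka 2048m 4096m
#   systemctl restart kafka.service
```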

Step 13: Add a Second AKHQ User

Replace <new-password> with the operator's chosen password:

sudo htpasswd -bB /etc/nginx/akhq.htpasswd alice <new-password>

sudo systemctl reload nginx

Each operator authenticates with their own basic-auth credential; the underlying Kafka cluster credentials remain the cloudimg SCRAM admin defined in /etc/akhq/application.yml.

Step 14: Add a Second Kafka SCRAM User

/opt/kafka/bin/kafka-configs.sh --bootstrap-server 127.0.0.1:9092 --command-config /tmp/cloudimg.properties --alter --add-config 'SCRAM-SHA-256=[password=<new-password>]' --entity-type users --entity-name <new-username>
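For <new-password>, a random value in the same 32 hex character shape as the image's own admin password can be generated with standard tools. This is a sketch; gen_hex_password is a hypothetical helper name, and how the image itself generates its password is not stated here.

```shell
#!/bin/sh
# gen_hex_password: print 32 lowercase hex characters (16 random bytes) from /dev/urandom.
gen_hex_password() {
  head -c 16 /dev/urandom | od -An -tx1 | tr -d ' \n'
}

# Usage:
#   NEW_PASS=$(gen_hex_password)
#   /opt/kafka/bin/kafka-configs.sh --bootstrap-server 127.0.0.1:9092 \
#     --command-config /tmp/cloudimg.properties --alter \
#     --add-config "SCRAM-SHA-256=[password=${NEW_PASS}]" \
#     --entity-type users --entity-name <new-username>
```

A hex-only password also avoids quoting problems inside the sasl.jaas.config line of the client's properties file.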

Step 15: Backups

Kafka data lives under /var/lib/kafka/data (topic logs in logs, KRaft metadata in metadata). Take a consistent backup by stopping the broker, archiving the directory, and restarting:

sudo systemctl stop kafka.service

sudo tar czf /var/backups/kafka-$(date +%F).tgz -C /var/lib/kafka data

sudo systemctl start kafka.service

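Periodically copy /var/backups to Azure Blob Storage (az storage blob upload-batch) for off-VM retention. For zero-downtime backups, replicate to a second cluster with MirrorMaker 2 (connect-mirror-maker.sh); the older MirrorMaker 1 script, kafka-mirror-maker.sh, was removed in Kafka 4.0.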
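If the tar step fails partway, the three commands above leave the broker stopped. One way to guard against that is to wrap them so the broker is always restarted and the archive is verified before it is reported. This is a sketch, meant to run as root; kafka_cold_backup and the SYSTEMCTL override are illustrative names.

```shell
#!/bin/sh
# Cold backup with a guaranteed restart and an archive integrity check.
SYSTEMCTL="${SYSTEMCTL:-systemctl}"   # overridable so the function can be dry-run

# kafka_cold_backup <parent-of-data-dir> <output-dir>: prints the archive path on success.
kafka_cold_backup() {
  archive="$2/kafka-$(date +%F).tgz"
  "$SYSTEMCTL" stop kafka.service || return 1
  tar czf "$archive" -C "$1" data
  rc=$?
  "$SYSTEMCTL" start kafka.service    # restart even if tar failed
  [ "$rc" -eq 0 ] || return "$rc"
  # Listing the archive forces a full read, which catches truncated files.
  tar tzf "$archive" >/dev/null && printf '%s\n' "$archive"
}

# Usage, as root:
#   kafka_cold_backup /var/lib/kafka /var/backups
#   az storage blob upload-batch --account-name <storage-account> \
#     --destination <container> --source /var/backups --pattern 'kafka-*.tgz'
```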

Step 16: Logs and Troubleshooting

sudo journalctl -u kafka.service --no-pager -n 80

sudo journalctl -u akhq.service --no-pager -n 30

sudo journalctl -u nginx.service --no-pager -n 30

sudo ls /var/log/kafka/

Kafka writes its main log to /var/log/kafka/server.log and its controller log to /var/log/kafka/controller.log. AKHQ's stdout goes to the systemd journal. The nginx access log is at /var/log/nginx/access.log and the error log at /var/log/nginx/error.log.
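To pull only the failures out of server.log, you can filter on the log level. The sketch below assumes Kafka's default log layout, where each entry starts with a bracketed timestamp followed by the level, for example "[2025-01-01 00:00:00,000] ERROR ..."; if the logging configuration on the image differs, adjust the pattern. recent_errors is a hypothetical helper name.

```shell
#!/bin/sh
# recent_errors <log-file> [count]: print the last <count> ERROR/FATAL entries (default 20).
# Only the first line of each entry is matched; stack trace lines are not included.
recent_errors() {
  grep -E '^\[[^]]*\] (ERROR|FATAL) ' "$1" | tail -n "${2:-20}"
}

# Usage:
#   sudo sh -c '. ./recent_errors.sh; recent_errors /var/log/kafka/server.log 10'
```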

Security

- SASL/SCRAM-SHA-256 enforced on the broker listener; no anonymous client connections accepted

- AKHQ Web UI bound to 127.0.0.1:8080, so it cannot be reached directly from the network

- nginx auth wall on :80 enforces HTTP basic authentication

- Per-VM cloudimg password used for both Kafka SCRAM AND AKHQ basic auth (single rotation)

- Restrict NSG inbound on :9092 and :80 to the IP ranges that need them

- Terminate TLS at an upstream Application Gateway / Front Door, or enable Kafka's own SASL_SSL listener via server.properties + a JKS keystore

- Controller listener bound to 127.0.0.1:9093 (loopback only), not exposed to the network

Support

cloudimg provides 24/7/365 expert technical support. Guaranteed response within 24 hours, one hour average for critical issues. Contact support@cloudimg.co.uk.